Why AI Automation Strategy Fails: 84% Haven't Documented Their Workflows

Enterprise AI pilots stall when workflows lack the documentation agents need to execute them. Smarsh, Zoom, and Amazon show why structured SOPs are the missing pillar of AI strategy.

Workflow documentation, not model selection, is the missing pillar of AI automation strategy.

Only 6% of enterprises fully trust AI agents on core processes, and unready workflows are a leading reason why.

Smarsh, Zoom, and Amazon all documented workflows before deploying a single AI agent at scale.

When Smarsh, a communications compliance platform serving regulated industries, decided to deploy an AI support agent across its customer operations, the first question its leadership asked wasn’t which model to use for its AI automation strategy. It was whether the organization had documented how work actually gets done well enough for an agent to execute it.

For a company serving 18 of the top 20 global banks, where a support failure can trigger a compliance event, that question wasn’t philosophical. It was existential.

The answer, unusually, was yes. Years of documentation discipline had already produced what most enterprises lack: clean, structured, governed documentation ready for an agent to act on. The challenge, as Smarsh's leadership framed it, was never about technology:

"How do we harness the knowledge we have internally and present that to these customers in a way that makes our teams more efficient, and customer service more effective?"

— Rohit Khanna, Chief Customer Officer, Smarsh

That question became the design brief. Smarsh named its agent "Archie," connected its internal documentation directly to Salesforce Agentforce, and went to production without stalling during the pilot phase. Self-service adoption reached 59%. Issue resolution accelerated 25%. Service productivity rose 30% without adding headcount.

The unlock wasn’t the model. It was everything that came before it. When executives ask what the 4 pillars of AI strategy are, the answer is consistent across frameworks: data, technology, talent, and governance. These are the right pillars, but on their own they are insufficient.

Underneath every failed pilot and every stalled enterprise AI deployment sits a fifth discipline that most frameworks omit, one that determines whether the other four can function at all: documenting how work actually gets done well enough for an agent to execute it.

That is the discipline Smarsh had before Archie was ever deployed. It is the discipline most enterprises skip entirely. And it is the reason enterprise AI transformation keeps stalling between experimentation and production. It’s not because models are inadequate, not because budgets are too small, but because the documentation foundation that agents depend on was never built.

In this article, we’ll break down how leading enterprises turned workflow documentation into the missing pillar of their AI automation strategy and what that means for scaling AI across the enterprise.

Workflow Documentation Is a Missing Pillar of AI Automation Strategy

Enterprise AI investment is accelerating across industries. AI tools are now embedded in multiple business functions, and experimentation with agents and automation platforms is becoming standard practice. Organizations are investing aggressively, launching pilots, and expanding use cases across teams.

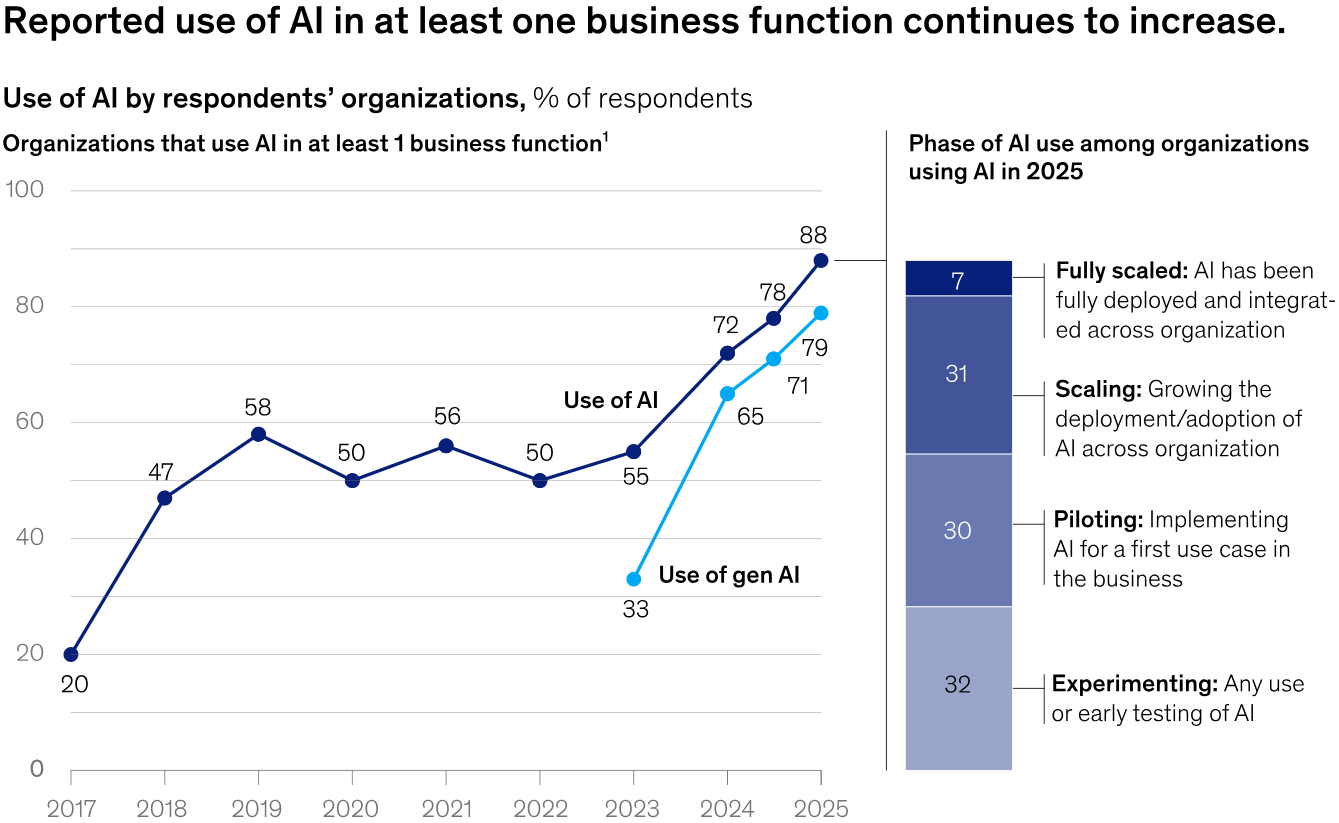

Yet enterprise-level AI impact remains uneven. McKinsey's 2025 State of AI survey clearly captures the gap. As shown in Exhibit 1 of the report, the share of organizations using AI in at least one business function has risen sharply year over year. The adoption curve looks compelling.

But the same research tells a different story at the enterprise level: most organizations haven’t yet begun to scale AI across the enterprise, and fewer than half report any measurable impact on EBIT. Adoption is broad. Depth is not.

Trust in AI automation strategies reflects a similar pattern. Just 6% of organizations fully trust AI agents to autonomously run core business processes. For most, the barrier isn’t access to technology, but readiness to rely on it in critical workflows. Among the top concerns, 31% cite cybersecurity risks, and 23% cite data quality. Close behind, 22% identify unready business processes as a key constraint.

At the operational level, the gap becomes more visible. 84% of organizations have not redesigned jobs or workflows around AI capabilities.

This reflects a broader challenge in AI workflow management, where organizations lack standardized, executable process definitions that systems can follow consistently. As a result, AI systems are introduced into environments not designed for them, limiting their ability to operate consistently across use cases.

For enterprise leaders, the constraint is structural. Without clearly defined and consistently executed workflows, each AI automation strategy operates in isolation, and value fails to compound. Across these patterns, one constraint shows up consistently: the challenge isn’t model capability, but the absence of clearly documented, structured workflows that AI systems can reliably execute.

SOPs Are No Longer Just Documentation. They Are AI Infrastructure.

For decades, standard operating procedures (SOPs) were treated as an operational afterthought. They were created after processes were already in place, documented in static formats, and rarely updated to reflect how work actually happened. In most organizations, they existed as reference material rather than a reliable guide to execution.

That approach breaks down the moment an AI automation strategy enters the picture.

Unlike human teams, AI agents don’t rely on interpretation. They require clearly defined steps, conditions, and rules to perform tasks consistently. This changes the role of SOPs entirely. They are no longer documents that describe work.

They are the product requirement documents that define what agents do, how they respond, and how outcomes are evaluated. In the age of agentic AI, the SOP is the instruction set.

The electricity analogy is useful here. When factories first adopted electric power, most simply replaced steam engines with electric motors and kept everything else the same. Gains were minimal. Real productivity came only when factories were redesigned around electricity rather than bolted onto the old system.

An AI automation strategy follows the same pattern. Organizations adding agents to workflows built for humans aren’t running an AI automation strategy. They are running the same process with a different engine.

The problem of documentation quality compounds this further. Most SOPs reflect what teams believe should happen, not what actually does. They miss real-world variations, exceptions, and edge cases, making them unreliable foundations for systems that depend on precise instructions.

The gap isn’t technical. It is structural. The three failure modes below show where it surfaces in an AI automation strategy.

Where Enterprise AI Automation Strategy Breaks Down in Practice

Model reliability is a real constraint in enterprise AI, but it’s rarely the only reason automation efforts fail. Just as often, the breakdown comes from a more fundamental issue: the underlying work hasn’t been defined clearly enough for systems to execute. When workflows are incomplete or loosely documented, even strong pilots struggle to extend into real operations.

Here are three structural failure modes that limit an enterprise AI automation strategy’s scalability:

- The Pilot-to-Production Gap: Many enterprises show progress in AI experimentation, yet studies show that only 25% have moved a significant share of initiatives into production, with most still stuck between proof of concept and deployment. Pilots often break down when extended to real systems, governance requirements, and cross-functional workflows because the underlying process was never clearly defined or documented in a scalable way.

- Rebuilding Identical AI Automations Across Departments: In many enterprises, different business units build similar AI automations for segmentation, routing, and personalization without a shared framework for reuse. Each team builds from its own understanding of a process because no centralized documentation defines how the work should be done. As a result, the same automated workflows are recreated across departments, producing parallel efforts rather than shared infrastructure and slowing enterprise-wide scaling.

- Automation Without Standardization: Many AI initiatives deliver results within a single workflow but are never converted into reusable patterns that can scale across functions or regions. The underlying process may work, but it isn’t documented in a way that others can adopt or execute consistently. Instead, it remains tied to a specific team’s way of working. That’s why most organizations continue to layer automation onto existing processes rather than redesigning them, with 84% yet to restructure workflows around AI. Without standardized documentation, even successful automation stays local and can’t compound across the enterprise.

Next, we’ll take a closer look at three case studies that show how these failure modes appear in real organizations. Each one highlights a specific documentation gap and how addressing it allows an AI automation strategy to move beyond isolated workflows and scale.

How Documented Workflows Took Zoom From AI Pilot to Production

Most enterprise AI deployments in SaaS can answer questions but can’t complete the requests behind them. Billing changes, account updates, and order management still require human intervention because the underlying workflows were never defined for end-to-end AI execution.

Zoom encountered this gap as its support needs scaled. Its earlier chatbot handled common queries but couldn’t reliably execute requests involving multi-step backend processes. Resolution still depended on human agents because the workflows behind those interactions weren’t structured for end-to-end execution.

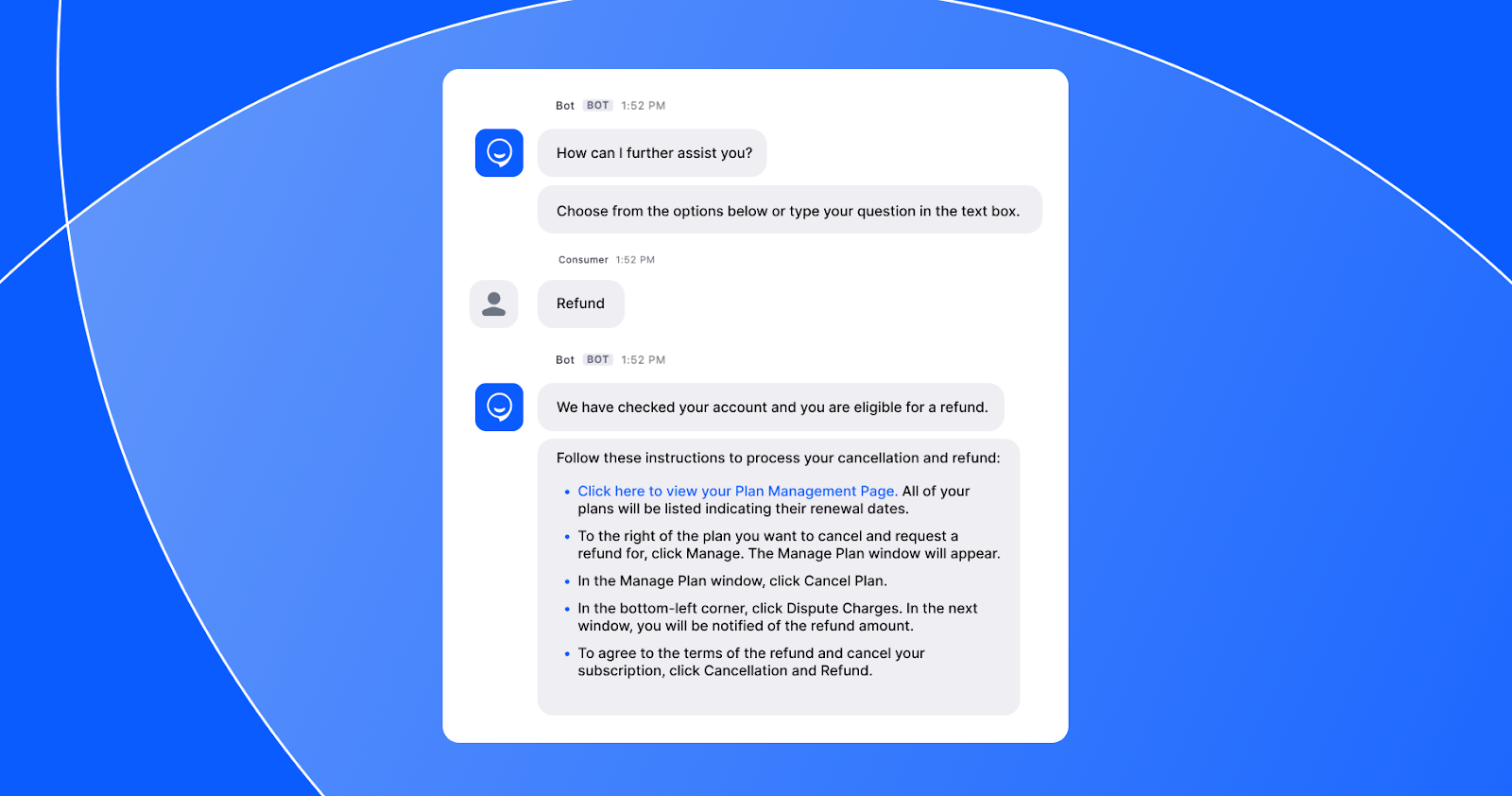

To address this, Zoom moved toward a more structured approach to defining and executing AI support workflows. With Zoom Virtual Agent 3.0, the system can orchestrate multi-step processes across CRM, billing, and service platforms, drawing on a centralized knowledge base and connected data sources to complete actions within a single interaction.

What made this possible wasn’t a better model. It was a better-defined AI automation strategy. As shown in the step-by-step flow example from Zoom Virtual Agent, the system presents customers with a structured, guided sequence of actions rather than an open-ended conversation.

Each step is predefined, each decision point is documented, and the agent executes against that structure rather than interpreting intent in real time. This is what executable workflow documentation looks like in practice.
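To make the idea concrete, here is a minimal Python sketch of a workflow expressed as data, with each decision point written down as an explicit branch. It is not Zoom's implementation: the `Step` class, the `BILLING_CHANGE` workflow, and every step and outcome name are hypothetical, chosen only to illustrate an agent executing against a predefined structure.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One predefined step in a documented workflow (hypothetical model)."""
    name: str
    action: str  # what the agent does at this step
    # Documented decision point: maps an observed outcome to the next step name.
    next_by_outcome: dict[str, str] = field(default_factory=dict)


# Hypothetical billing-change workflow; names and outcomes are illustrative only.
BILLING_CHANGE = {
    "verify_account": Step("verify_account", "Confirm the requester owns the account",
                           {"verified": "check_plan", "failed": "handoff_to_human"}),
    "check_plan": Step("check_plan", "Look up the current plan in billing",
                       {"eligible": "apply_change", "ineligible": "handoff_to_human"}),
    "apply_change": Step("apply_change", "Apply the plan change and confirm"),
    "handoff_to_human": Step("handoff_to_human", "Escalate with full context"),
}


def run(workflow, start, outcomes):
    """Walk the workflow from `start`, using the supplied outcome per step.

    Raises KeyError when an outcome has no documented branch, which is the
    kind of gap an incompletely documented workflow would expose.
    """
    path, current = [], start
    while True:
        step = workflow[current]
        path.append(step.name)
        if not step.next_by_outcome:  # terminal step: nothing left to decide
            return path
        current = step.next_by_outcome[outcomes[step.name]]
```

Calling `run(BILLING_CHANGE, "verify_account", {"verify_account": "verified", "check_plan": "eligible"})` walks the three steps ending in `apply_change`. An outcome nobody documented fails loudly instead of leaving the agent to improvise.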

The shift was from responding to queries to following defined workflows. This transition marks a critical shift in enterprise AI deployment, where systems move beyond surface-level automation to executing fully defined business processes.

“Zoom Virtual Agent 3.0 orchestrates multi-step workflows across systems, continuously learns from human resolutions, and provides full transparency into every agentic action.”

—Chris Morrissey, General Manager of Zoom CX

This transition delivered a measurable impact. Billing deflection increased from 0% to 30% in three months, saving over 1,000 agent hours per month. The no-match rate dropped from 35% to 0%, and overall CSAT improved by 25 points to reach 80%.

Overall, an AI automation strategy moves into production when documented workflows become the instruction set that systems can execute consistently. Improvements in model responses alone don’t make that transition possible.

How Shared SOPs Solved Amazon's Duplicate AI Automation Problem

AI development at scale rarely fails because teams lack ideas. It breaks down when the same automation is built repeatedly across the organization, with each team solving similar problems in isolation and lacking a shared way to capture and reuse how work gets done.

At Amazon, this pattern emerged as AI adoption expanded internally. Tens of thousands of builders across AWS, retail, logistics, and research teams began using AI agents for tasks such as code reviews, documentation, and incident response. Each team moved quickly, creating workflows tailored to its own needs, but without a common structure, similar automation logic was rebuilt across functions.

As a result, inconsistencies grew. The same agent produced different results across environments, prompt engineering became a bottleneck, and successful patterns could not be shared across teams. The issue was not capability, but the absence of a structured way to define how workflows should operate.

To address this, Amazon’s internal builder community introduced Agent SOPs, a standardized markdown format for defining AI agent workflows in natural language. Instead of relying on prompts or hard-coded logic, teams began documenting workflows as structured, step-by-step instructions that agents could follow consistently across environments.

These SOPs act like clear instructions for AI agents. They lay out each step, define what must happen versus what is optional, and include the key inputs needed to get the job done. They also track progress and allow work to pick up where it left off. This makes workflows easier to repeat, understand, and use across different systems and situations.
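The properties described above (ordered steps, required versus optional actions, declared inputs, and resumable progress) can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Amazon's Agent SOP format, which is written in markdown; the `Sop` and `SopStep` classes and the `do_step` callback are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class SopStep:
    instruction: str
    required: bool = True  # "must happen" versus optional


@dataclass
class Sop:
    name: str
    inputs: list[str]      # inputs the agent needs before starting
    steps: list[SopStep]
    completed: int = 0     # progress marker: index of the next step to run

    def missing_inputs(self, provided: dict) -> list[str]:
        return [key for key in self.inputs if key not in provided]

    def run(self, provided: dict, do_step) -> bool:
        """Run the remaining steps in order; return True once the SOP is finished.

        `do_step(instruction, provided)` returns True on success. A failed
        required step stops the run and leaves `completed` pointing at it,
        so a later call resumes there. A failed optional step is skipped.
        """
        missing = self.missing_inputs(provided)
        if missing:
            raise ValueError(f"missing inputs: {missing}")
        while self.completed < len(self.steps):
            step = self.steps[self.completed]
            ok = do_step(step.instruction, provided)
            if not ok and step.required:
                return False
            self.completed += 1
        return True
```

Because progress lives on the SOP object rather than in a prompt, a second call to `run` picks up at the step that failed instead of starting over.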

This allows teams to build AI automations faster, share them across functions, and reuse them without rebuilding the same logic. What began as prompt engineering has evolved into a shared operational layer, with thousands of SOPs used across Amazon to reduce duplication at scale.

What Amazon Did When Automation Without Standardization Stalled Global AI Deployment

Demand forecasting in large supply chains rarely fails due to insufficient data. More often, it breaks down when forecasting systems are built separately across regions. Local teams deploy their own models and improve accuracy in isolation, but inventory decisions remain inconsistent across the network.

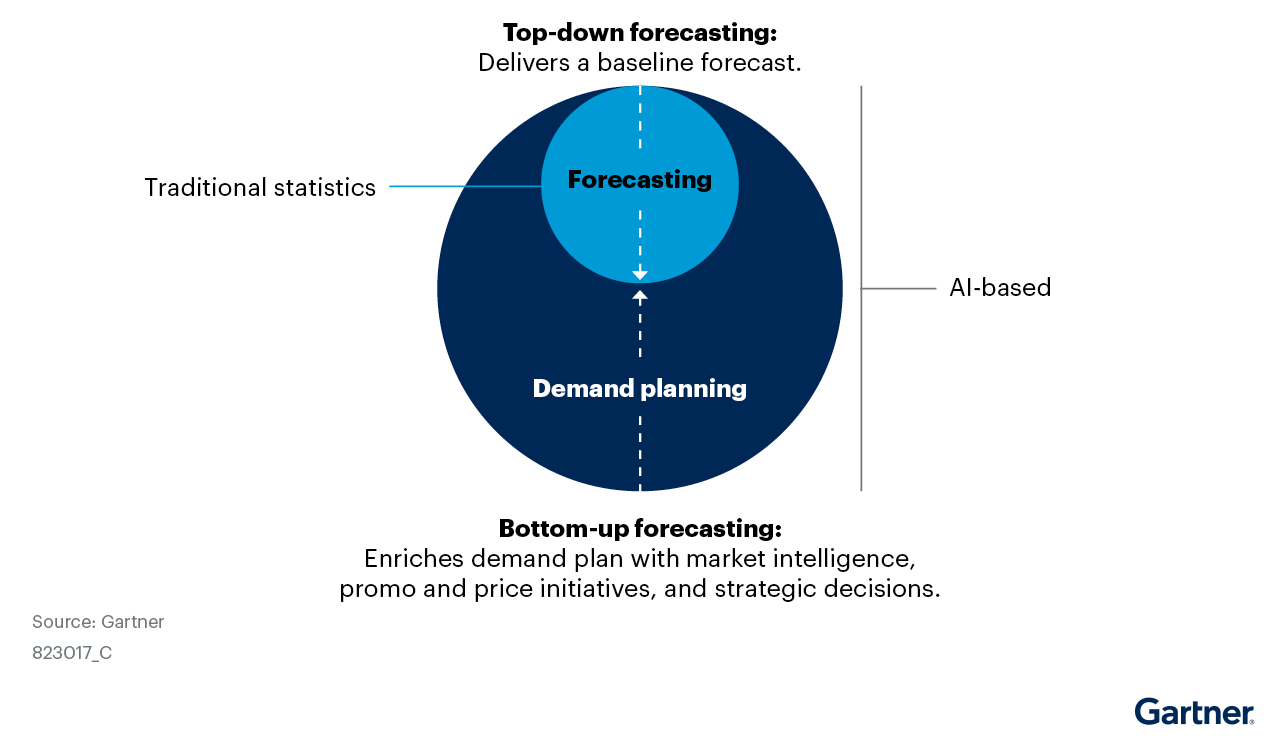

Across the industry, companies are moving toward AI-driven forecasting. By 2030, about 70% of large organizations are expected to adopt AI-based supply chain forecasting to predict future demand. Yet adoption today remains limited because many organizations struggle to redesign planning processes and standardize automation across global operations.

Figure 1 in Gartner's Demand Planning Automation Vision clearly shows the shift. Traditional statistics deliver a top-down baseline forecast. AI adds a second layer underneath, enriching the demand plan with market intelligence, promotional data, and strategic inputs that no statistical model can process alone. Standardization isn’t about replacing existing forecasting. It is about every region executing consistently against the same shared model.

For instance, Amazon faced this challenge while forecasting demand across millions of SKUs and fulfillment centers worldwide. Predicting what customers would need and where to place inventory required coordination at a scale that traditional planning systems could not support.

The company embedded AI forecasting directly into its supply chain execution systems. The models consider factors such as price, convenience, weather, and sales events to predict demand and optimize inventory placement. Rather than producing analytical reports for planners, forecasts now feed directly into same-day delivery and fulfillment systems that execute decisions automatically.

“It allows us to sell a different set of books in Boston than we would in Boise, and cater to different tastes really, really efficiently across the communities that we serve.”

—Nathan Smith, Director of Demand Forecasting, Amazon Supply Chain Optimization Technologies

The operational impact is already visible. AI-enhanced delivery maps guide U.S. drivers through complex routes more efficiently. Forecasting now runs through shared execution systems across the logistics network, so inventory decisions rely on the same underlying models.

By standardizing its AI forecasting and embedding it into execution, Amazon removed duplication and turned local AI pilots into solutions that work across regions, allowing automation to scale and deliver consistent results.

Your AI Automation Strategy Begins With Writing It Down

When Smarsh asked whether its documentation was ready for an agent to execute before selecting a model or a platform, that question wasn’t a delay to its AI automation strategy. It was the strategy. Archie succeeded not because Smarsh picked the right technology but because the documentation foundation was already in place before the technology was chosen.

Zoom and Amazon reached the same starting point through different paths. The discipline came before the deployment in every case.

Today, the majority of enterprises are building orchestration layers to connect systems, data, and applications into a unified foundation for AI agents. But orchestration without documented workflows is an empty framework. Without that documentation, enterprise AI transformation remains fragmented, and automation can’t compound across business units.

The challenge is straightforward. Identify one high-value workflow planned for AI automation and ask whether it is documented well enough for an agent to execute it independently.

If the answer is no, that documentation work isn’t a delay to your AI automation strategy. It is your AI automation strategy. Write it down first. Everything else follows.


Frequently Asked Questions

What results have enterprises achieved by documenting workflows before deploying AI?

Enterprises that documented workflows before deploying AI have seen measurable operational gains. Smarsh, which had existing data governance discipline and clean, documented workflows, projected a 25% faster issue resolution rate, a 20% increase in self-service, and a 30% gain in service productivity after mapping its processes to an agentic platform. Zoom, after codifying its support workflows as executable process definitions, saw billing team deflection rise from 0% to 30% in three months, saved over 1,000 agent hours per month, and reduced its no-match rate from 35% to 0%.

How do you know if your workflows are ready for AI automation?

A practical test: pick one high-value workflow your team is planning to automate and ask whether the end-to-end process is documented in enough detail that an agent could execute it without asking a human what to do next. If the answer is no, the documentation work is not a delay to your AI automation strategy. It is the strategy. Only 6% of enterprises currently report fully trusting AI agents to autonomously run core business processes, with unready business processes cited as a leading barrier.
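One way to run that test repeatably is a simple checklist over the workflow document. The sketch below is an assumption-laden example, not an established standard: the section names in `REQUIRED_FIELDS` and the `decision` / `branches` keys are hypothetical, and a real checklist would reflect your own process conventions.

```python
# Assumed checklist of sections a workflow document should contain.
REQUIRED_FIELDS = ("trigger", "inputs", "steps", "exceptions", "done_when")


def readiness_gaps(workflow: dict) -> list[str]:
    """Return human-readable gaps; an empty list means the doc passes this checklist."""
    gaps = [f"missing section: {name}" for name in REQUIRED_FIELDS
            if not workflow.get(name)]
    for i, step in enumerate(workflow.get("steps", []), start=1):
        if not step.get("action"):
            gaps.append(f"step {i}: no action described")
        # A decision point with no documented branches forces the agent to guess.
        if step.get("decision") and not step.get("branches"):
            gaps.append(f"step {i}: decision without documented branches")
    return gaps
```

An empty result does not prove a workflow is agent-ready, but a non-empty one points to specific places where an agent would have to stop and ask a human.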

What is an SOP in the context of AI agents?

In the context of AI agents, a Standard Operating Procedure (SOP) functions as the product requirement document (PRD) that defines what an agent builds, executes, and optimizes. Rather than improvising, AI agents follow documented operational logic: step-by-step workflow definitions that specify inputs, constraints, and expected outputs. Amazon's internal builder community formalized this approach through Agent SOPs, a standardized markdown format now used across thousands of internal workflows and open-sourced via the Strands Agents framework.

Why do most enterprise AI automation projects fail to scale?

Most enterprise AI projects fail to scale because organizations never document how work actually gets done. Research from Deloitte finds that 84% of companies have not redesigned workflows around AI capabilities. A separate HBR Analytic Services survey identifies unready business processes as the third most common barrier to enterprise AI adoption, cited by 22% of leaders. The constraint is rarely the model. It is the absence of documented, structured workflows that AI agents can execute against.

What is an AI automation strategy?

An AI automation strategy is an organization's plan for deploying AI agents and automated workflows to execute business processes at scale. In enterprise contexts, an effective AI automation strategy goes beyond model selection. It requires documented, structured workflows that AI agents can execute against. Without that documentation foundation, automation efforts typically stall at the pilot stage and never reach production.
