Agentic AI in Insurance Didn't Need More Data. It Needed Structure.

The insurance industry scaled agentic AI ahead of most other sectors. It did so not because of better technology, but because of a level of structural readiness most enterprises are still trying to build.

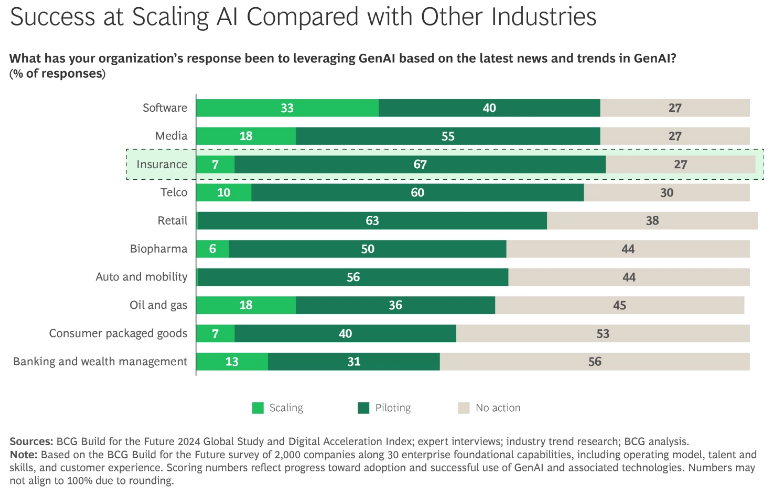

Insurance is leading AI adoption trends, with 67% of insurers piloting generative AI by 2024.

In most sectors, nearly 80% of enterprise data is still unstructured, which limits what AI can reliably act on.

Agentic AI scales through structured workflows, not stronger models alone.

On a freezing Saturday night, Brandon Pham’s Canada Goose parka was stolen. Six days later, he opened Lemonade, an AI-powered insurance app, recorded a 61-second video explaining the loss, and submitted his claim.

What happened next looked nothing like a traditional insurance claim. In three seconds, it was approved.

Lemonade’s AI system reviewed the claim, checked it against his policy, ran 18 anti-fraud algorithms, approved a $729 payout, and initiated the bank transfer. No adjusters. No paperwork. No back-and-forth.

That speed didn’t come from a single AI model. Lemonade had already embedded automation across underwriting, claims processing, fraud detection, and customer support, replacing many traditional insurance workflows with integrated machine learning systems and rules-based decisioning.

A three-second timeline is astonishing in an industry where claims processing can stretch over weeks, and insurers in many markets are legally required to settle claims within 30 to 45 days.

"In the 3,000-year history of insurance, nothing like this has ever happened in 3 seconds. The number to beat is now 3 seconds, and we hope others will rise to the challenge."

—Daniel Schreiber, CEO and Co-founder, Lemonade

What Lemonade demonstrated was more than speed. It was structural readiness. By 2025, BCG ranked insurance at an AI maturity level on par with technology and telecommunications, despite the industry’s reputation for paper-heavy workflows and rigid regulation.

The advantage was not better AI models or larger budgets. Insurers already operate on structured data, rules-based decision systems, actuarial discipline, and audit-ready workflows, the exact foundations agentic AI systems need to scale reliably.

Most enterprises are now trying to build that infrastructure while simultaneously running AI pilots, which is why many of those pilots stall. A major reason is the state of enterprise data itself. The explosive growth of unstructured data, estimated by Gartner to account for between 70% and 90% of enterprise data, has made it difficult for AI systems to operate reliably across enterprise workflows.

The insurers that successfully scaled AI answered a foundational question early: what good is agentic AI if the surrounding infrastructure isn’t ready to support it? Their advantage wasn’t access to different models or more advanced technology. It was the operational structure surrounding those systems.

In this article, we’ll take a closer look at the structural failures that keep most AI initiatives stuck in pilot mode and how leading insurers have built the operational foundations needed to successfully scale agentic AI in insurance.

The Real Reason AI in Insurance Is Scaling Faster

Insurance technology spending is projected to rise by $173 billion in 2026 as insurers move AI from experimentation into core business operations. Investment in AI in insurance is increasingly focused on claims automation, underwriting systems, fraud detection, advanced analytics, and customer experience platforms as carriers modernize core workflows across the enterprise.

Yet progress remains uneven across the industry. BCG’s Build for the Future 2024 Global Study found that while 67% of insurers are piloting generative AI initiatives, only 7% have scaled them beyond experimentation into enterprise-wide deployment.

The insurers that successfully moved beyond pilots are now reporting productivity gains exceeding 30%, especially in service and operations functions where AI assistants augment employee workflows. These gains are tied to measurable improvements in cycle time, throughput, and operational efficiency.

What separates these companies is how thoroughly AI is embedded into workflows, decision systems, and operational structures before scaling begins. Lemonade is a clear example of this approach. The company integrated automation and AI across underwriting, claims processing, fraud detection, customer support, and marketing operations rather than deploying AI in isolated use cases.

Today, Lemonade uses roughly 50 machine learning models to manage 90% of its marketing spend and predict customer behavior, while 98% of sales are handled through chatbots. The same AI infrastructure also powers claims automation, where many claims are settled in as little as three seconds.

The broader lesson is that the constraint isn’t the technology itself. The primary limitation is the lack of operational structures needed to scale generative AI and agentic AI in insurance.

What Prevents Agentic AI in Insurance From Scaling

So, what is AI in insurance? At its core, it refers to the use of machine learning, automation, and decision systems across claims processing, underwriting, fraud detection, and customer service. While insurers are actively investing in AI, the gap between early experimentation and enterprise-wide value remains significant.

The real challenge lies in the operational issues that prevent agentic AI in insurance from scaling reliably across the business. These issues fall into three common failure points that explain why many insurers remain stuck at the pilot stage:

- Lack of Structured Workflows AI Can Reliably Operate Within: Most enterprises deploy AI into processes that lack clear routing rules, defined criteria, or structured decision logic. As a result, AI has nothing consistent to act on, and pilots stall before reaching production. This often happens because companies begin with the technology rather than the business problem itself. As Alex Singla, Senior Partner and Global Coleader of QuantumBlack, AI by McKinsey, explains: “One thing we’ve learned: the business goal must be paramount. In our work with clients, we ask them to identify their most promising business opportunities and strategies and then work backward to potential gen AI applications.”

- Missing Shared Ownership Across Business and Technology Teams: Even when the technology works, organizational friction prevents AI from scaling. The biggest barriers aren’t technical; they are structural. Many teams operate in silos, with technology groups pushing for speed while business units focus on risk, compliance, and customer impact. Such silos cost businesses an estimated $3.1 trillion annually in lost revenue and productivity.

- Governance Added After Deployment Instead of Before: Governance is often treated as something to address after deployment, when in reality it determines whether AI can scale at all. Expectations around explainability, bias, auditability, and human oversight are evolving quickly, especially in regulated industries like insurance. Yet only 18% of organizations report having an enterprise-wide responsible AI governance council with decision-making authority.

Next, let’s walk through a few examples of leading enterprises that addressed these structural failures and successfully scaled AI in insurance beyond the pilot stage.

How Aviva Turned 80 AI Models into £60M in Claims Savings

Aviva, the largest general insurer in the UK, faced a structural breakdown in its motor claims operation. These claims were fragmented across multiple teams and channels, with no consistent routing logic or decision criteria. Liability assessments were also slow, and customer complaints were high. The issue was the absence of a unified decision structure governing how claims moved through the system.

Instead of optimizing individual tasks, Aviva made a deliberate shift to redesign the entire claims domain. The leadership team committed to a domain-wide transformation, building shared decision logic that could operate consistently across workflows.

"Aviva's leadership had extreme conviction that, contrary to conventional belief, they could improve customer experience, efficiency, and accuracy in parallel if they adopted a domain-wide approach."

—Sid Kamath, McKinsey Partner

Aviva deployed more than 80 AI models across the claims lifecycle, embedding them in a system governed by clear rules and escalation paths. This allowed the claims journey to move fluidly between digital automation and human intervention, with complex cases, such as personal injury, defaulting to human handlers. The AI systems operated within defined boundaries rather than as a blanket replacement for people, keeping every decision auditable and compliant.
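To make “defined boundaries” concrete, here is a minimal Python sketch of rules-based claim routing with human escalation and an audit trail. It is not Aviva’s system: the claim fields, the thresholds (`MIN_MODEL_CONFIDENCE`, `MAX_AUTO_AMOUNT`), the `HUMAN_ONLY_TYPES` set, and the route names are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical claim types that always default to a human handler,
# mirroring the personal-injury example in the text.
HUMAN_ONLY_TYPES = {"personal_injury"}

# Hypothetical thresholds; a real carrier would set these through governance review.
MIN_MODEL_CONFIDENCE = 0.90
MAX_AUTO_AMOUNT = 5_000.00


@dataclass
class Claim:
    claim_id: str
    claim_type: str
    amount: float
    model_confidence: float  # score from an upstream (hypothetical) triage model


@dataclass
class RoutingDecision:
    claim_id: str
    route: str   # "automated" or "human_handler"
    reason: str
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def route_claim(claim: Claim, audit_log: list) -> RoutingDecision:
    """Apply explicit, ordered rules so every routing decision is explainable."""
    if claim.claim_type in HUMAN_ONLY_TYPES:
        decision = RoutingDecision(claim.claim_id, "human_handler", "claim type requires human handling")
    elif claim.model_confidence < MIN_MODEL_CONFIDENCE:
        decision = RoutingDecision(claim.claim_id, "human_handler", "model confidence below threshold")
    elif claim.amount > MAX_AUTO_AMOUNT:
        decision = RoutingDecision(claim.claim_id, "human_handler", "amount above automation limit")
    else:
        decision = RoutingDecision(claim.claim_id, "automated", "within all automation boundaries")
    # Record every decision, automated or not, so it can be audited later.
    audit_log.append(decision)
    return decision


if __name__ == "__main__":
    log = []
    print(route_claim(Claim("C-1", "windscreen", 320.0, 0.97), log).route)
    print(route_claim(Claim("C-2", "personal_injury", 1_200.0, 0.99), log).route)
```

Because the rules are evaluated in a fixed order, the reason attached to each decision is the first boundary the claim hit, which is what makes the routing explainable after the fact.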

The results were substantial. Liability assessment time for complex cases dropped by 23 days, routing accuracy improved by 30%, and customer complaints fell by 65%, contributing to £60 million in savings in 2024.

The underlying pattern: Aviva’s gains didn’t come from a single AI model but from redesigning claims operations around structured decision logic, which let AI operate reliably at scale.

Scaling AI in Insurance Across 70 Countries Through Shared Ownership

Allianz Group operates at a scale that makes AI deployment structurally complex, with 156,000 employees, nearly 70 countries, 97 million customers, and more than 900 registered AI use cases worldwide.

The challenge wasn’t building models; it was scaling them across dozens of siloed business units, such as motor, property, health, and pet, each with its own workflows, regional constraints, and decision logic. Technology teams could develop solutions, but unless these separate business units trusted and owned them, deployment would stall.

Allianz addressed this by reframing AI as a shared responsibility rather than a centralized technology initiative. Instead of handing solutions down from a core data team, accountability was distributed across business, data science, IT, legal, and privacy functions from the outset.

“AI isn’t managed solely by data scientists; it’s a shared responsibility with accountability across business teams.”

—Manuela Diviach, Head of Group Operations, Organization and Data

This shift ensured that adoption was driven from within operating units rather than imposed from above. The company established the DAITAB, a governance body comprising board members, operating entities, and group functions, positioned above business-unit lines to remove cross-functional friction early.

On the ground, AI systems were developed with continuous user input: Insurance Copilot, launched in 2024 for automotive claims in Austria, was refined through claims-handler feedback before expanding into other domains.

"Insurance Copilot is like having extra colleagues in the claims department helping adjusters navigate complex cases with ease and speed. The Copilot lets them ask questions and get precise answers without wasting time."

—Ali Riza Savas Saral, Head of Insurance Automation at Allianz Technology

Such efforts were reinforced by large-scale change programs, including a global AI run that reached over 150,000 employees and data literacy initiatives that supported tens of thousands more.

The results reflect both scale and consistency. For instance, in Germany, 49.7% of pet insurance claims were fully automated in 2025, with simple cases paid out in hours. Similarly, in Australia, the processing time for food spoilage claims dropped from around seven days to under one day.

Behind these outcomes is a governance model that predates regulatory pressure. The DAITAB and tools such as the AI Value Canvas ensure that every deployment has a clear business case and defined ownership before scaling begins.

In other words, Allianz solved fragmentation by redesigning ownership. Once accountability was shared and governance sat above business-unit silos, adoption of generative AI followed.

How Manulife Scaled AI in Insurance Faster by Building Governance First

For Manulife Financial Corporation, the challenge wasn’t introducing AI, but scaling it consistently across a global business spanning 37,000 employees, 109,000 agents, and 36 million customers across Canada, Asia, Europe, and the United States (where it operates as John Hancock). Each market brought different regulatory expectations around explainability, bias, privacy, and auditability. While many insurers slowed deployment, waiting for clearer standards, Manulife moved in the opposite direction.

Instead of delaying, the company treated governance as a prerequisite for AI deployment. It established responsible AI principles, covering explainability, fairness, and human oversight, before regulators formalized requirements. These principles were operationalized through expanded model risk frameworks, vendor vetting processes, and cross-functional oversight spanning legal, compliance, data science, and business teams.

“For every dollar we are investing in deploying AI solutions, we are also investing in AI safety.”

—Jodie Wallis, Global Chief AI Officer, Manulife

Governance wasn’t layered on after deployment; it defined what could be deployed in the first place. This approach extended into production systems.

Every AI use case was reviewed for ethical and privacy risks before scaling, and continuous monitoring was built directly into deployment, tracking accuracy, drift, and usage in real time. Models were adjusted or removed as soon as they failed to meet defined thresholds. MAUDE, Manulife’s AI underwriting engine, reflects this philosophy: explainability and auditability were designed into its architecture from the start rather than retrofitted later.
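The threshold-based monitoring described above can be sketched in a few lines of Python. This is an illustrative assumption, not MAUDE or Manulife’s tooling: it measures input drift with the population stability index (PSI), a common drift metric, and maps accuracy and drift to a keep, adjust, or remove decision. The thresholds, including the frequently cited 0.25 PSI cutoff, are placeholders.

```python
import math
from dataclasses import dataclass

# Hypothetical thresholds; the article says only that thresholds were "defined".
MIN_ACCURACY = 0.85
MAX_PSI = 0.25  # a commonly used rule of thumb for significant drift


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as proportions that each sum to 1."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        psi += (a - e) * math.log(a / e)
    return psi


@dataclass
class ModelHealth:
    model_name: str
    accuracy: float
    psi: float


def review_action(health: ModelHealth) -> str:
    """Map monitored metrics to an action: keep, adjust, or remove."""
    if health.accuracy < MIN_ACCURACY:
        return "remove"  # fails the accuracy bar outright
    if health.psi > MAX_PSI:
        return "adjust"  # inputs have drifted; retrain or recalibrate
    return "keep"


if __name__ == "__main__":
    baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at approval time
    current = [0.10, 0.20, 0.30, 0.40]   # score distribution this week
    psi = population_stability_index(baseline, current)
    print(review_action(ModelHealth("underwriting-model", accuracy=0.91, psi=psi)))
```

Keeping the thresholds as named constants is a deliberate choice: they become the artifact a governance team reviews and signs off on, separate from the model code itself.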

The impact was acceleration. Between 2016 and 2023, Manulife deployed roughly 70 AI use cases. After embedding governance into its operating model, it deployed 140 in 2024 and 2025 alone, with hundreds more planned. MAUDE, for instance, now automatically approves 58% of eligible life insurance applications, delivering decisions in as little as two minutes.

Globally, digital initiatives generated over $600 million in benefits in 2024, with AI expected to deliver a threefold return by 2027. Overall, Manulife didn’t wait for regulatory certainty. It built governance strong enough to meet requirements before they existed, and in doing so, turned compliance from a constraint into a scaling advantage.

Why Infrastructure Matters More than Models for Agentic AI in Insurance

Lemonade was able to approve and pay a stolen-parka claim in three seconds because the infrastructure supporting its AI systems was already in place before deployment began.

That is the larger pattern across the insurance industry. Companies that moved beyond pilots did not succeed because they had better models. They succeeded because they built the operational structure needed to make AI reliable, repeatable, and scalable across the business.

The gap between experimentation and production remains. In 2025, 42% of companies abandoned most of their generative AI initiatives, up from 17% the year before. On average, organizations scrapped half of their AI proofs of concept before reaching production.

Before your next board cycle, pull your three largest active AI initiatives and ask a simpler set of questions. Is there structured decision logic at every step, or is AI operating within workflows with nothing concrete to act on? Is AI owned solely by a technology team, or is accountability shared across the business units that ultimately have to trust and use it? Was governance built into the system before deployment, or is compliance being retrofitted around models already in production?

If any of those answers lands on the weaker option, the issue isn’t the model, the data, or the investment. It is the absence of infrastructure that makes those things deployable at scale.


Frequently Asked Questions

What results are leading companies seeing from AI in insurance?

Leading insurers are reporting measurable gains in efficiency, cost reduction, and customer experience. Aviva saved £60 million in 2024 after redesigning claims workflows around AI-driven decision systems. Manulife generated more than $600 million in digital benefits in the same year, while its AI underwriting engine now automatically approves 58% of eligible life insurance applications in as little as two minutes.

Where does gen AI in insurance most commonly fail to scale?

Generative AI in insurance often stalls because organizations deploy models before building the operational structure needed to support them. Common barriers include unstructured enterprise data, disconnected business and technology teams, unclear decision workflows, and weak governance frameworks. As a result, many AI pilots never progress into enterprise-wide deployment.

How is generative AI in insurance being used today?

Generative AI in insurance is being used across claims handling, underwriting, customer service, fraud detection, and internal operations. Insurers are deploying AI assistants to support employees, automate document processing, summarize claims data, and improve customer interactions. Companies like Manulife and Allianz are also embedding AI directly into underwriting and claims workflows to speed up decisions and reduce manual processing.

Which industries lead in AI adoption today?

Insurance has emerged as one of the leading industries for enterprise AI adoption, reaching an AI maturity level comparable to technology and telecommunications, according to BCG’s 2025 analysis. The industry’s advantage comes from decades of structured data, rules-based workflows, and compliance systems that created a strong foundation for scaling AI across operations.

What is AI in insurance, and why does it matter?

AI in insurance refers to the use of machine learning, automation, and decision systems across claims processing, underwriting, fraud detection, customer service, and risk assessment. It matters because insurers are using AI to reduce processing time, improve operational efficiency, lower costs, and deliver faster customer experiences. Lemonade, for example, processed and paid a claim in three seconds using automated claims and fraud detection systems.

What is agentic AI in insurance, and how does it differ from standard AI?

Agentic AI in insurance refers to AI systems that can make decisions and take action within structured workflows with minimal human intervention. Unlike standard AI, which typically generates predictions or recommendations for employees to review, agentic AI can route claims, trigger approvals, escalate complex cases, and execute operational tasks autonomously within defined rules and governance frameworks.
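One way to picture “within defined rules” is a policy check that sits between an agent’s proposed action and its execution. The sketch below assumes a hypothetical allowlist of autonomous actions (`AUTONOMOUS_ACTIONS`) and a hypothetical payout ceiling (`MAX_AUTONOMOUS_PAYOUT`); anything outside them is escalated to a person rather than executed.

```python
from dataclasses import dataclass

# Hypothetical action policy: which actions an agent may execute on its own,
# and the payout ceiling for autonomous approval.
AUTONOMOUS_ACTIONS = {"request_documents", "route_claim", "approve_payout"}
MAX_AUTONOMOUS_PAYOUT = 1_000.00


@dataclass(frozen=True)
class ProposedAction:
    name: str
    claim_id: str
    amount: float = 0.0


def authorize(action: ProposedAction) -> str:
    """Return 'execute' if the action is inside the policy, else 'escalate'."""
    if action.name not in AUTONOMOUS_ACTIONS:
        return "escalate"  # unknown or high-risk action: a human decides
    if action.name == "approve_payout" and action.amount > MAX_AUTONOMOUS_PAYOUT:
        return "escalate"  # payout above the autonomous limit
    return "execute"


if __name__ == "__main__":
    print(authorize(ProposedAction("approve_payout", "C-1", 729.0)))
    print(authorize(ProposedAction("deny_claim", "C-2")))
```

The design choice here is default-deny: the agent can only do what the policy explicitly lists, so adding a new autonomous capability becomes a governance decision rather than a side effect of a model update.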
