Shadow AI Isn’t an Employee Issue. It’s a Demand Signal.

Shadow AI isn't a rogue employee problem; it's what happens when AI demand outpaces governance. See how JPMorgan, Microsoft, and Guardian Life turned that signal into strategy.

69% of C-suite leaders are comfortable with unsanctioned AI, modeling a core issue: placing speed above governance.

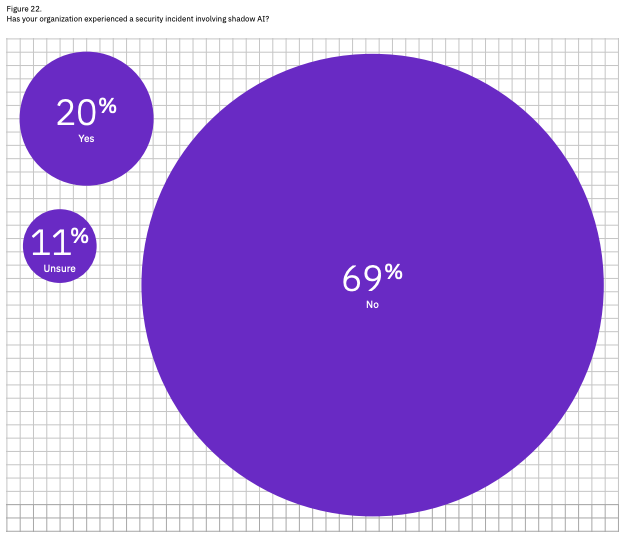

One in five organizations has already experienced a breach traced directly to the use of shadow AI.

JPMorgan onboarded 200,000 employees to a sanctioned, governed AI program in 8 months.

In March 2023, engineers at Samsung's semiconductor division were responsible for three major data leaks over twenty days. One engineer fed proprietary source code into a chatbot, another uploaded internal code, and a third recorded a confidential meeting, transcribed it, and fed the text into ChatGPT.

All of them were simply trying to do their jobs faster, but the consequences of shadow AI risks can be immediate and irreversible. Samsung's source code was now technically in OpenAI's possession, with no mechanism to retrieve it. Samsung immediately banned ChatGPT across all company devices and began building its own internal tools.

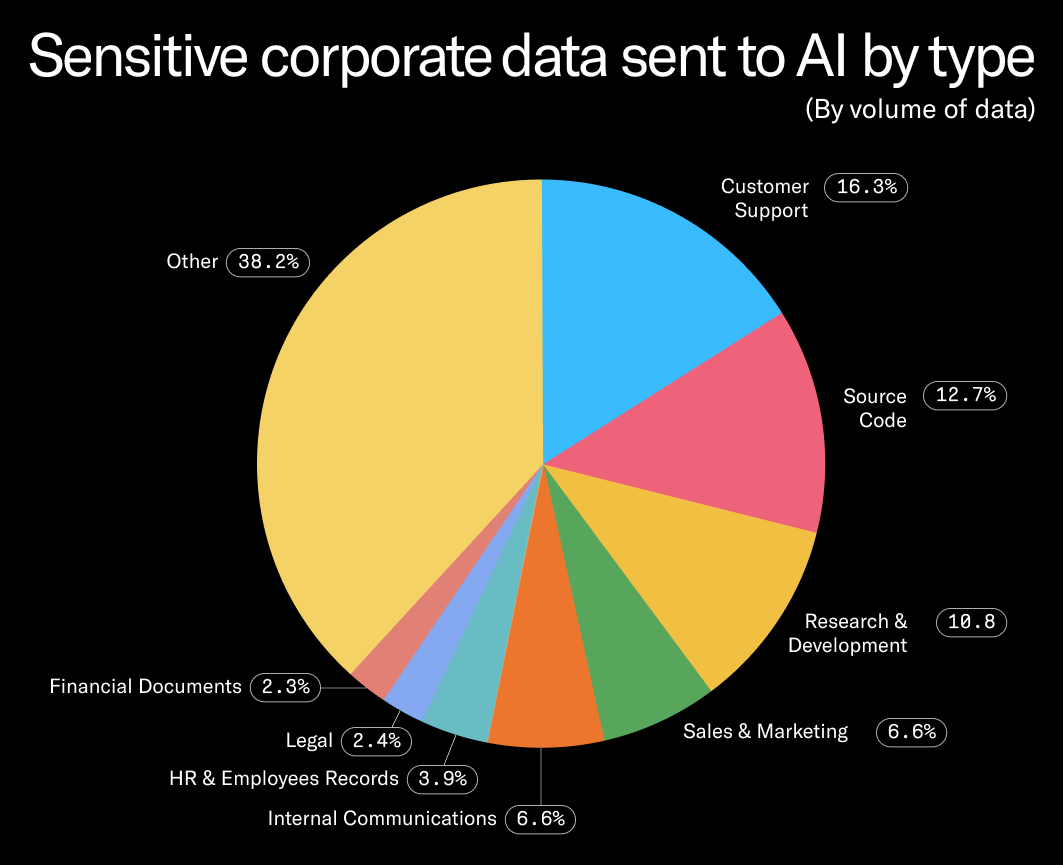

This is what’s commonly referred to as “shadow AI,” the unauthorized use of AI tools by individuals and teams within organizations. According to research by Cyberhaven, an AI and data security platform, legal data, HR & employee records, source code, and research and development account for over 25% of the sensitive data sent to AI.

Samsung is far from the only company with a cautionary tale about public shadow AI. An Amazon lawyer sent an internal Slack message warning employees that the company had already seen ChatGPT-generated text that closely resembled internal company data, the result of engineers using it as a coding assistant for proprietary code. And in October 2025, a contractor for the New South Wales Reconstruction Authority (Australia) uploaded an Excel spreadsheet to a public AI chatbot. The file contained over 12,000 rows of flood victim data, including names, addresses, phone numbers, and personal health information, and the upload inadvertently exposed data from as many as 3,000 people.

Shadow AI isn’t a story about bad actors. Most CIOs treat shadow AI as a security threat to be eliminated. But the data tells a different story: shadow AI is a demand signal, not just a vulnerability. The companies that channel it into governed environments capture both the productivity employees were chasing and the oversight boards require.

Why Is Shadow AI a Growing Issue?

Shadow AI security risks are real and accelerating. A January 2026 survey from AI security firm BlackFog found that 49% of workers admit to using AI tools without employer approval. A 2025 KPMG global study of more than 48,000 people across 47 countries found that 57% of employees hid their AI use from employers and presented AI-generated work as their own. Only 40% of workplaces had any policy or guidance on generative AI. IBM's 2025 Cost of a Data Breach Report found that one in five organizations experienced a breach due to shadow AI, 63% lacked governance to prevent shadow AI from spreading, and that high shadow AI usage added as much as $670,000 to breach costs.

Shadow AI governance is crucial even when using a tool with built-in guardrails. For example, Microsoft 365 Copilot, when properly configured, offers tenant-level data isolation, respect for existing permissions, and other built-in protections. But shadow AI can still persist outside of sanctioned tools, and these features are not a substitute for governance, policy enforcement, and user oversight.

The rise of shadow AI is the predictable result of a gap between organizational readiness and workforce urgency. KPMG’s study found that half of employees reported feeling pressure to use AI out of fear of being left behind, and in most workplaces, employees made their own decisions about which tools to use and what data to share. The responsibility for creating appropriate governance frameworks for AI tools ultimately lies with leadership.

Why Most Approaches to Shadow AI Miss the Mark

Many organizational responses to shadow AI are failing in three distinct ways:

- A real AI program is expensive. Most organizations are underfunding it. The true cost of enterprise AI extends well beyond the model license: data quality, system integration, governance infrastructure, and workforce training all carry significant budget implications that organizations routinely discover too late. Shadow AI flourishes in the gap between "we have a tool" and "we have a program," and closing that gap costs more than most AI budgets currently account for.

- Banning backfires. Many employees will continue using personal AI accounts even after an organizational ban. Prohibition does not eliminate demand; it just pushes the behavior out of sight, where no policies or guardrails apply.

- Leadership is modeling the problem. The most alarming finding in the BlackFog data? 69% of presidents and C-suite members, and 66% of directors and senior VPs, said they are comfortable with unsanctioned AI use, prioritizing speed over governance. A governance program that leadership does not model is a governance program that does not work.

How Microsoft Made Shadow AI Visible

Even organizations that have deployed a sanctioned AI tool find that shadow AI persists. Employees who have been using personal accounts for months do not stop because IT deployed something new; a tool without surrounding governance infrastructure, training, and detection capability is not a program. Microsoft's approach addresses this at the infrastructure layer rather than the policy layer.

Edge for Business, integrated with Microsoft Purview, analyzes AI prompts in real time at the browser level. When an employee attempts to submit sensitive data to an unsanctioned AI tool, the action is audited or blocked before the data leaves the organization's control. This closes the gap that policy alone cannot: the moment between intent and exposure.
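To make the audit-or-block idea concrete, here is a minimal Python sketch of a prompt screen. It is not Microsoft's implementation or any Purview API. The host name, the pattern list, and the `screen_prompt` function are all hypothetical, and the regular expressions stand in for the much richer classifiers a real product would use.

```python
import re
from dataclasses import dataclass

# Hypothetical allowlist of sanctioned AI endpoints (assumption, not a real product setting).
SANCTIONED_HOSTS = {"ai.internal.example.com"}

# Illustrative patterns only; real data classifiers go far beyond regexes.
SENSITIVE_PATTERNS = {
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "confidential_label": re.compile(r"\bconfidential\b", re.IGNORECASE),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}

# Pattern names that should stop the submission instead of only logging it.
BLOCKING = {"private_key"}


@dataclass
class Verdict:
    action: str         # "allow", "audit", or "block"
    matches: list[str]  # names of the patterns that fired


def screen_prompt(host: str, prompt: str) -> Verdict:
    """Decide what to do with a prompt before it is sent to `host`."""
    if host in SANCTIONED_HOSTS:
        return Verdict("allow", [])
    matches = sorted(
        name for name, rx in SENSITIVE_PATTERNS.items() if rx.search(prompt)
    )
    if any(name in BLOCKING for name in matches):
        return Verdict("block", matches)
    if matches:
        return Verdict("audit", matches)
    return Verdict("allow", [])
```

The sketch separates "audit" from "block" on purpose: logging low-severity matches keeps employees productive while still giving security teams visibility, and only the highest-risk content stops the submission outright.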

How JPMorgan Created an Opt-in Strategy

JPMorgan Chase demonstrates what it looks like to treat shadow AI as a demand signal rather than a threat. Banning unsanctioned tools without providing a credible alternative is a governance dead end, and for a bank with $4.4 trillion in assets and strict regulatory obligations, unsanctioned AI data flows were an existential risk. Rather than impose a ban, JPMorgan built a better alternative.

In the summer of 2024, JPMorgan launched LLM Suite, a proprietary generative AI platform built entirely in-house. The platform is model-agnostic, connecting employees to approved large language models from multiple providers while keeping all data within the bank's own compliance infrastructure. Lawyers use it to analyze contracts. Investment bankers use it to build decks; work that previously took junior analysts hours now takes roughly 30 seconds. Engineers use it for code review.

The rollout reached 200,000 employees within eight months, driven by an opt-in strategy that Chief Analytics Officer Derek Waldron describes as creating "healthy competition, driving viral adoption." The bank estimates up to $1.5 billion in annual value from its AI initiatives, with employees reporting 30–40% efficiency gains.

"We wanted to enable generative AI technology for the firm, but we wanted to do so in a very safe and secure way that made sure that we were able to understand the data lineage."

— Derek Waldron, Chief Analytics Officer, JPMorgan Chase

Shadow AI detection at JPMorgan was ultimately solved not by surveillance but by irrelevance. When the sanctioned tool is genuinely better than what an employee could find on their own, the demand signal gets channeled rather than suppressed.

Guardian Treats Governance as a Maturity Problem

MIT CISR (the MIT Center for Information Systems Research) reframes the lack of appropriate AI governance and leadership buy-in as an organizational maturity problem, not a policy problem. When senior leaders tolerate unsanctioned AI use, they signal to the entire organization that governance is optional. The MIT CISR Enterprise AI Maturity Model shows what that signal costs.

The model, based on a survey of 721 companies, maps four stages of AI maturity to financial performance. Enterprises at Stage 3, where AI ways of working are scaled across the organization, outperform their industry averages by 11.3 percentage points on growth and 8.7 percentage points on profit.

The jump from Stage 2 to Stage 3 is where most enterprises stall. The 2025 MIT CISR update identifies stewardship as the critical differentiator: embedding compliant and transparent AI practices by design, not as a compliance checkbox applied after the fact. Guardian Life Insurance is among the companies MIT CISR cites as actively navigating this transition.

The implication for shadow AI is direct. Organizations stuck at Stage 2 treat governance as a constraint on adoption. But organizations moving toward Stage 3 treat it as the mechanism that makes scaling possible. Building that governance architecture before incidents force the issue is the difference between leading on AI and cleaning up after it. Shadow AI is most entrenched where governance is treated as an afterthought, where senior leaders have not yet connected their own tolerance of unsanctioned tools to the maturity ceiling it creates.

What Should Your Organization Do About Shadow AI Risks?

The Samsung engineers who caused the breach at the top of this piece were not the problem. The same is true of the Amazon employees whose AI-assisted code outputs resembled proprietary data, and of the NSW contractor who uploaded 12,000 rows of flood victim records to a public chatbot. In each case, the organization had not given employees a better path.

The CIOs gaining ground on this have stopped asking how to stop employees from using unsanctioned AI and started asking a better question: Are our governed tools genuinely good enough? Microsoft's infrastructure makes shadow AI visible before data leaves the building. JPMorgan reached 200,000 users in eight months by building something employees preferred. Guardian Life and others are learning that governance embedded by design should be the default.

If your executives are among the 69% comfortable with unsanctioned AI, your governance program has a credibility problem that no policy document will fix. Shadow AI is a demand signal. The organizations reading it correctly are building programs; the ones ignoring it are building liability.

Frequently Asked Questions

How can organizations reduce shadow AI risks without killing productivity?

The most effective approach is building a governed alternative that employees genuinely prefer. JPMorgan Chase onboarded 200,000 employees to its in-house LLM Suite within eight months by making it more capable and better integrated than any external tool. Organizations that pair a strong sanctioned tool with a clear governance policy and detection infrastructure see significantly better outcomes than those relying on bans.

How does shadow AI detection work?

Shadow AI detection typically combines network monitoring, browser-level controls, and SaaS usage auditing to identify unsanctioned AI tools. Microsoft's Edge for Business with Purview integration, for example, analyzes AI prompts in real time and blocks or audits sensitive data submissions before they leave the organization's control.
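As a rough illustration of the usage-auditing piece, the Python sketch below counts requests to AI hosts that are known but not sanctioned. Everything here is an assumption for illustration: the two-column `user,host` log format, the host lists, and the `unsanctioned_ai_usage` function are hypothetical and do not reflect any specific vendor's tooling.

```python
from collections import Counter

# Hypothetical host lists (assumption); a real deployment would maintain these centrally.
KNOWN_AI_HOSTS = {"chatgpt.com", "chat.example-ai.com", "ai.internal.example.com"}
SANCTIONED_AI_HOSTS = {"ai.internal.example.com"}


def unsanctioned_ai_usage(log_lines):
    """Count requests per (user, host) to known AI hosts that are not sanctioned.

    Each log line is assumed to look like "user,host".
    """
    counts = Counter()
    for line in log_lines:
        parts = line.strip().split(",")
        if len(parts) != 2:
            continue  # skip malformed rows
        user, host = parts
        host = host.lower()
        if host in KNOWN_AI_HOSTS and host not in SANCTIONED_AI_HOSTS:
            counts[(user, host)] += 1
    return counts
```

A report like this is most useful as a demand map: it shows which teams are reaching for outside tools, and therefore where a sanctioned alternative is most needed.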

Why do employees use shadow AI even when it's banned?

Employees use shadow AI to stay competitive when approved alternatives are inadequate, absent, or poorly communicated. Shadow AI fills the gap left by underfunded or ungoverned enterprise AI programs.

What are the biggest shadow AI security risks for enterprises?

The primary shadow AI security risks are unauthorized data exposure, regulatory violations, and IP loss. IBM's 2025 Cost of a Data Breach Report found that one in five organizations surveyed experienced a breach due to shadow AI, and that high shadow AI usage added as much as $670,000 to breach costs.

What is shadow AI?

Shadow AI refers to the use of artificial intelligence tools by employees without employer authorization, IT oversight, or governance controls. It typically involves consumer-grade tools like ChatGPT being used for work tasks involving sensitive data, outside any sanctioned enterprise AI program.
